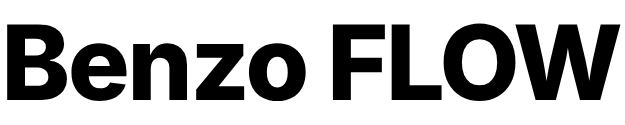

How Were Numbers Invented? 40,000 Years Of Counting History

We use numbers constantly to check the time, pay for coffee, or measure ingredients, often without a second thought. In 2026, the decimal system (0–9) feels as natural as breathing. But these digits didn’t just appear out of thin air. They are the result of thousands of years of human ingenuity, trial, and error.

Numbers have a biography that stretches from the dawn of civilization to the modern smartphone in your pocket. In this post, we’ll journey back in time to explore how humanity learned to count, how symbols evolved into systems, and how we arrived at the mathematical language we speak today.

Numbers originated around 40,000 years ago among early hunter-gatherer tribes, who used simple methods such as fingers, stones, and tally marks to track food and resources.

As civilizations advanced, more sophisticated systems emerged, including the Babylonians’ base-60 system (sexagesimal) and the Egyptians’ base-10 system (Hieroglyphic).

These ideas evolved further with the invention of zero and the Hindu-Arabic numeral system, which spread globally and forms the foundation of modern mathematics.

Now, let’s dive deep into this.

What Are Numbers Anyway?

Numbers are abstract ideas that we represent with symbols to count objects, measure size or amount, and describe order (like first, second, third). In the earliest stages of human life, people didn’t have written numbers, so they used simple, practical methods such as counting on fingers, placing stones in piles, or making tally marks to keep track of food, animals, and other resources.

As societies grew larger and more organized, these basic methods became limiting. Trade, construction, taxation, and astronomy all required greater precision and consistency. To meet these needs, humans developed more structured and efficient number systems. These systems made it possible to perform accurate calculations, record large quantities, and manage increasingly complex aspects of daily life.

Are Numbers Discovered Or Invented?

The answer depends on your perspective. Think of the pairs W and M, or 6 and 9: each pair shares one shape, and which symbol you see depends on the point of view you take.

Discovered: On one hand, numbers feel discovered because they describe real patterns that exist in nature. For example, if you have three apples, that “three-ness” exists whether or not humans name it. Mathematical relationships, such as patterns in geometry or physics, seem to be part of the universe itself, waiting to be found.

Invented: On the other hand, numbers are also invented because humans created the symbols and systems we use to represent them. The digits (0–9), number names, and different number systems (such as Roman numerals or the decimal system) are all human-made tools designed to make counting and calculation easier.

Personally, I think there’s a bit of a grey area in this question. On the one hand, we discovered underlying concepts such as quantities and patterns that already exist in nature. On the other hand, we invented the language used to describe them, including symbols and number systems. So, numbers are not purely discovered or invented. Rather, they are a blend of natural reality and human creativity.

| Perspective | Key Example | Origin |

|---|---|---|

| Discovered | Primes, infinity patterns | Prehistoric tallies “found” quantities |

| Invented | Zero, decimals | Babylonian/Indian innovations |

| Both | Positional systems | Evolved from needs, universal logic |

Counting Vs Numbers

Counting is the basic process of determining how many items exist in a collection, usually by listing them one by one (1, 2, 3…), or by matching each item to a unique marker, such as pointing to objects while counting. It is a practical activity rooted in one-to-one correspondence, and early humans relied on simple methods like fingers, stones, or tally marks to keep track of quantities.

Numbers, by contrast, are abstract symbols and concepts that represent those quantities independently of the objects being counted. For example, you might count five sheep, but the number “5” can also represent five stars, five apples, or any other group. This ability to generalize makes numbers far more powerful, as they allow us not only to count but also to measure, compare, and perform complex calculations.

| Aspect | Counting | Numbers |

|---|---|---|

| Nature | Action or process | Abstract tools/symbols |

| Origin | Finger tallies ~ 40k years ago | Evolved symbols (e.g., Babylonian base-60) |

| Use | Matches items one-by-one | Quantifies, measures, calculates |

| Example | Touch each fish: 1, 2, 3… | Write “3 fish” for reuse |

In short, counting is the action, while numbers are the abstract system that makes counting, mathematics, and advanced reasoning possible. As human societies grew and became more complex, simple counting methods were no longer sufficient, leading to the development of structured number systems capable of handling trade, science, and large-scale problem-solving.

History Of Numbers

Humans likely began counting through simple, intuitive methods tied to survival needs, like tracking animals, days, or possessions. The most ancient and universal calculator is the human hand, and our ten fingers are the likely reason base-10 (decimal) is the standard for most of the world today. The earliest evidence is tally marks on bones and stones from the Paleolithic era, dating back 40,000 to 50,000 years.

To better understand the development of the number system, I have divided its evolution into 9 phases, spanning from the prehistoric era to the modern day. This structured approach provides a clear overview of how numbers have gradually evolved, reflecting the growing complexity of human thought and civilization.

Phase 1 – Prehistoric Beginnings (40,000 BCE)

Early humans relied on body-tallying and environmental tokens by using fingers, knuckles, and toes, which allowed for a portable “base” system (often base-10 or base-20). When quantities exceeded the body’s capacity, they turned to wood, bone, and stone.

These tallies acted as an “external memory,” allowing a tribe to track elapsed time, seasonal migrations, or communal resources without the cognitive burden of memorizing large totals.

The first physical evidence of counting comes from tally sticks. These were bones or pieces of wood with notches carved into them to keep track of elapsed time, lunar cycles, or quantities of livestock.

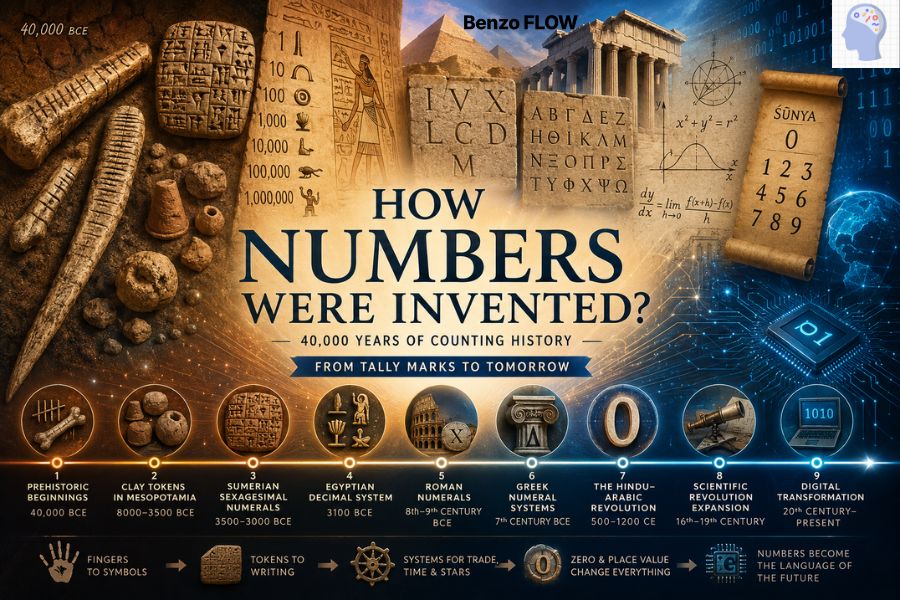

The Lebombo Bone

The Lebombo Bone is a small baboon fibula with 29 notches, discovered in Border Cave in the Lebombo Mountains between South Africa and Eswatini. It dates to about 43,000–42,000 years ago and is widely regarded as one of the oldest known mathematical artifacts, suggesting that early humans in southern Africa were already using marked objects to keep count or record information.

Because the 29 notches closely match the length of a lunar month, many researchers believe the bone may have served as a lunar‑phase or calendar stick, possibly tracking menstrual or seasonal cycles. Others interpret it more conservatively as a tally stick or ritual object, but regardless, it stands as key evidence for early abstract counting and the deep African roots of numerical and time‑tracking practices.

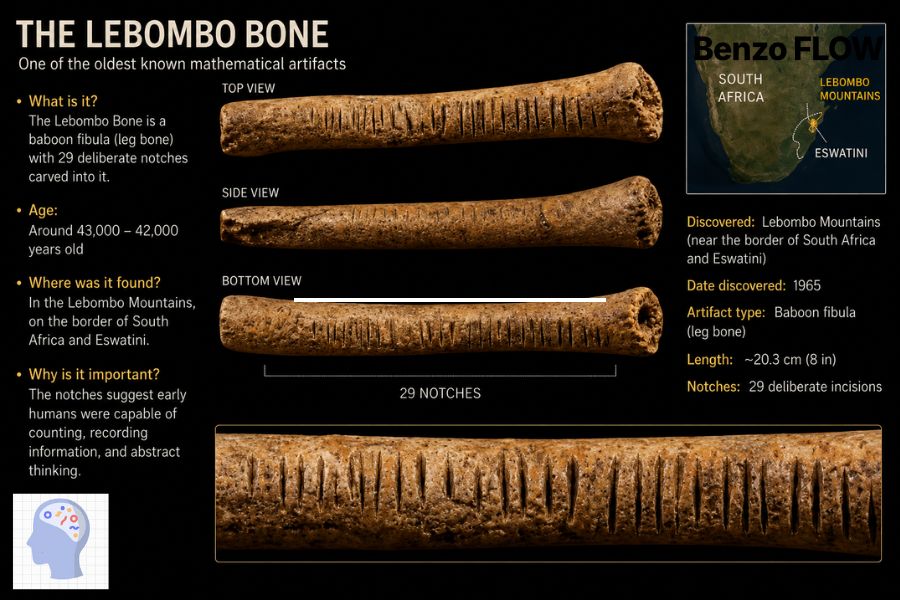

The Ishango Bone

The Ishango Bone is a small, dark‑brown, pencil‑sized bone tool about 10 cm long, discovered near the Semliki River in what is now the Democratic Republic of the Congo in the 1950s. It carries three parallel columns of grouped notches, about 168 marks in total, carved more than 20,000 years ago, and is now kept at the Royal Belgian Institute of Natural Sciences in Brussels.

Scholars debate its purpose, with interpretations ranging from a simple tally stick to a possible early mathematical or calendrical device. Some see patterns in the groupings as hints of arithmetic, prime number-like sequences, or a base‑12 counting system, while others suggest it may represent a lunar calendar or a teaching aid.

Whatever its exact use, the Ishango Bone is widely described as one of the oldest known objects that may embody deliberate numerical or mathematical thinking.

Phase 2 – Clay Tokens in Mesopotamia (8000–3500 BCE)

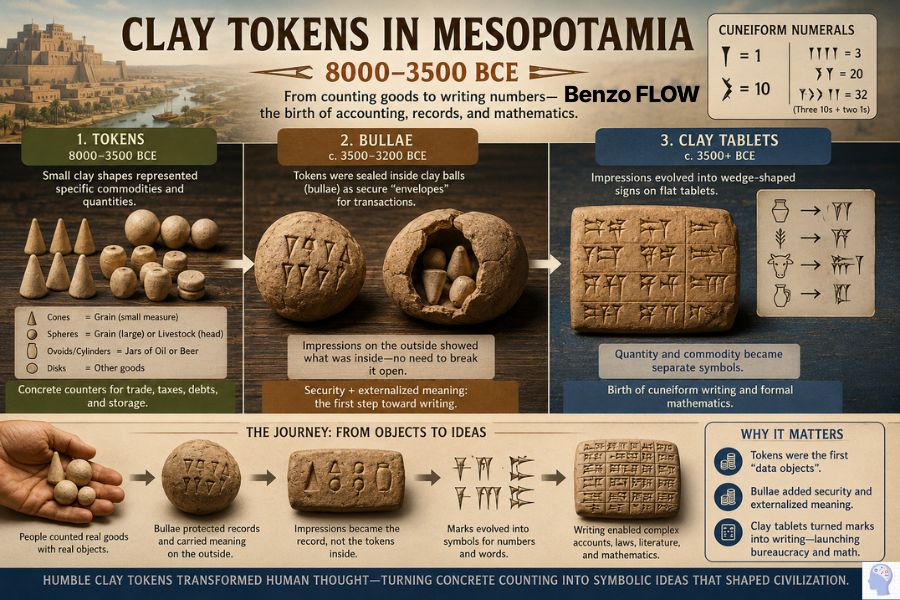

Around 8000–3500 BCE in Mesopotamia (often called the cradle of civilization), human society underwent a major transformation. As people shifted from hunting and gathering to farming, they began producing surplus grain, oil, livestock, and other goods stored in temples and traded between villages.

As granaries filled and trade expanded across villages, human memory and simple tally marks on bones could no longer keep up. This created a new problem: how to reliably track storage, trade, taxes, and debts in growing communities.

The solution was a revolutionary system of clay tokens that transformed math from a personal memory aid into a powerful social and economic tool.

Small, standardized clay tokens in shapes such as cones, spheres, disks, and cylinders were used, each representing a specific quantity of a specific commodity. A cone might stand for a measure of grain, a sphere for a head of livestock, and an ovoid for a jar of oil.

- Cones: Small measures of grain.

- Spheres: Large measures of grain or head of livestock.

- Ovoids/Cylinders: Jars of oil or specific quantities of beer.

This system wasn’t abstract like modern numbers; it was concrete and tied directly to real goods. If a farmer owed five jars of oil, he would physically hand over five matching tokens. In this sense, tokens acted as a kind of “physical data” or contract, making trade and accounting more reliable.

At first, these tokens were simply passed from hand to hand as records of debt or delivery. But as trade grew more complex, people needed a way to keep them secure and verifiable. They began sealing tokens inside hollow clay balls called bullae (singular: bulla), the early “envelopes” for transactions.

These acted like tamper-proof containers for economic agreements. However, since the contents couldn’t be seen without breaking them open, a practical innovation emerged: people started pressing the shapes of the tokens onto the outside of the bullae before sealing them. This way, the contents could be “read” without opening the container.

Suddenly, the mark on the clay meant the same thing as the token inside, where a sphere mark represented a spherical token, which in turn represented a head of livestock or a unit of grain. This was the key moment, the move from three‑dimensional (3D) tokens to two‑dimensional (2D) signs on clay. Eventually, people realized the tokens inside the bulla were no longer necessary.

The marks on the outside were enough. Bullae gradually gave way to flat clay tablets, and the impressed shapes began to evolve into stylized symbols.

Instead of drawing five jars of oil, scribes started separating quantity from commodity: a symbol for “5” and a separate sign for “oil.” These impressions gradually evolved into abstract signs, forming the basis of cuneiform, one of the earliest writing systems. The pressed, wedge‑shaped marks became cuneiform numerals, laying the foundation for both writing and formal arithmetic.

In this way, humble clay tokens used as a practical tool for counting grain and livestock transformed human thought, turning concrete counting into symbolic representation, shaping bureaucracy, trade, and the mathematics that still underpins our world today.

Cuneiform numerals used a vertical wedge (𒁹) for 1 and a chevron (𒌋) for 10, forming additive groups up to 59 (e.g., three 10s + two 1s for 32).
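To make the additive grouping concrete, here is a minimal Python sketch that builds a single digit from chevrons and wedges. The function name `cuneiform_digit` is my own, and the two glyphs are simply copied from the sentence above; treat it as an illustration of the idea rather than a faithful rendering of how scribes laid out the signs.

```python
WEDGE = "𒁹"    # vertical wedge, value 1 (glyph copied from the text above)
CHEVRON = "𒌋"  # chevron, value 10 (glyph copied from the text above)


def cuneiform_digit(n: int) -> str:
    """Render one digit from 1 to 59 as chevrons (tens) followed by wedges (ones)."""
    if not 1 <= n <= 59:
        raise ValueError("a single digit covers 1 to 59")
    tens, ones = divmod(n, 10)
    return CHEVRON * tens + WEDGE * ones


# 32 is written as three chevrons followed by two wedges.
print(cuneiform_digit(32))
```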

- Tokens were the first “data objects”;

- Bullae added security and began to externalize meaning onto the clay surface;

- Clay tablets turned those marks into proper written numbers and text, launching both bureaucracy and mathematics.

| Feature | Tokens (8000–3500 BCE) | Bullae (c. 3500–3200 BCE) | Clay tablets (c. 3500+ BCE) |

|---|---|---|---|

| Form | Small, hand‑molded clay shapes (cones, spheres, cylinders, disks) | Hollow clay balls or spheres enclosing tokens inside | Flat, rectangular clay slabs pressed or inscribed with wedges |

| Material | Molded clay | Molded clay | Molded clay |

| Function | Physical counters representing specific goods and quantities (e.g., a cone = a unit of grain) | Sealed “envelopes” that hide tokens and protect the record | Carrier of permanent numerical and textual records (accounts, lists, laws) |

| How info was read | By counting and inspecting each token (you literally held the numbers) | By breaking the bulla open to see the tokens inside, or later by reading impressions on the outside | By reading impressed cuneiform marks (symbols for numbers and words) |

| Level of abstraction | Concrete: each token stands directly for a real good and quantity | Transitional: impressions of tokens on the outside make the tokens partly symbolic | Abstract: symbols separate quantity (numeral signs) from item (word signs) |

| Role in history | Earliest systematic counting and accounting, precursor to numerals | “Security envelope” and bridge between token counting and written marks | Birth of formal writing (cuneiform) and structured arithmetic |

Phase 3 – Sumerian Sexagesimal Numerals (3500–3000 BCE)

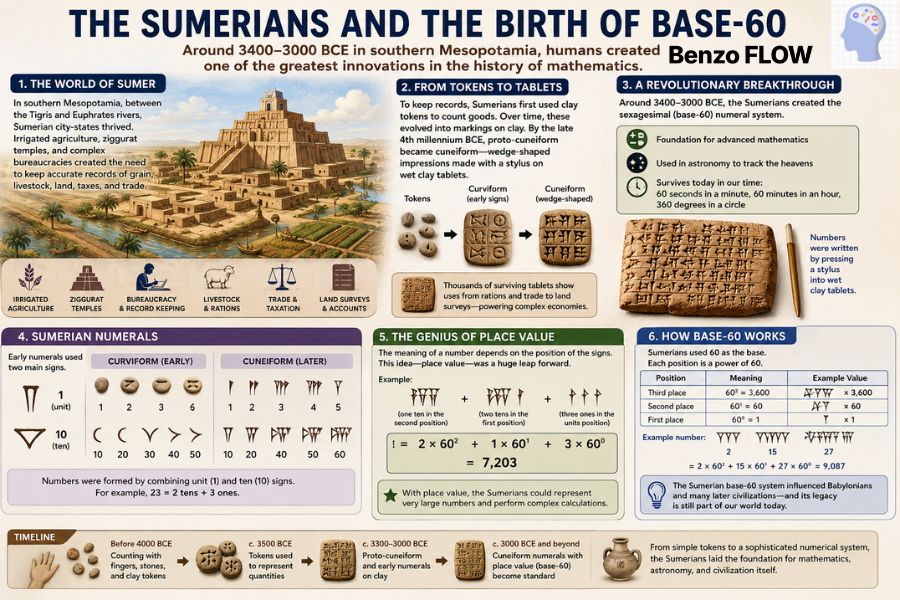

Sumer, in southern Mesopotamia along the Tigris-Euphrates rivers, featured urban centers with irrigated agriculture, ziggurat temples, and bureaucracies that demanded accurate records of grain, livestock, and trade. Proto-cuneiform evolved into numerals for these needs, transitioning from tokens to wedge impressions on clay by the late 4th millennium BCE. Thousands of surviving tablets reveal uses from rations to land surveys, powering complex economies.

Around 3400–3000 BCE, in the fertile lands of southern Mesopotamia (Sumer), humans made one of the most important intellectual breakthroughs in history: the creation of the sexagesimal (base-60) numeral system. Written in early cuneiform script on clay tablets, this system became the foundation for advanced mathematics, astronomy, and even modern timekeeping.

Sumerian numerals were written using a stylus pressed into wet clay tablets. Initially, they used rounded shapes (curviform), but these evolved into the classic cuneiform (wedge-shaped) signs.

The real innovation came when the Sumerians began using place value:

- First position = 1s (60⁰)

- Second position = 60s (60¹)

- Third position = 3600s (60²)

This meant the same symbol could represent different values depending on position. This is one of the earliest known forms of positional notation in human history.
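The place-value idea can be sketched in a few lines of Python. The helper names `to_sexagesimal` and `from_sexagesimal` are hypothetical, and the digits are shown as ordinary integers from 0 to 59 rather than wedge signs; it is a modern illustration of base-60 positions, not a reconstruction of scribal practice.

```python
def to_sexagesimal(n: int) -> list[int]:
    """Split a non-negative integer into base-60 digits, most significant first."""
    if n < 0:
        raise ValueError("only non-negative integers are supported")
    digits = []
    while True:
        n, digit = divmod(n, 60)
        digits.append(digit)
        if n == 0:
            break
    return digits[::-1]


def from_sexagesimal(digits: list[int]) -> int:
    """Rebuild the integer: each step to the left multiplies the weight by 60."""
    value = 0
    for digit in digits:
        value = value * 60 + digit
    return value


print(to_sexagesimal(3661))  # [1, 1, 1]  -> 1×3600 + 1×60 + 1
print(to_sexagesimal(4000))  # [1, 6, 40] -> 1×3600 + 6×60 + 40
```

Notice that the digit 1 appears three times in 3661 and means something different each time, which is exactly the point of positional notation.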

While modern society relies on Base-10 (decimal), the Sumerians chose 60. Though they left no “manual” explaining why, historians point to two compelling reasons:

The “Highly Composite” Advantage

60 is a mathematical powerhouse. It is the smallest number divisible by every number from 1 to 6.

- Divisors of 60: 1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30, 60.

- Practicality: This made trade and land surveys incredibly simple. Dividing a harvest into thirds, quarters, or fifths resulted in clean, whole numbers, avoiding the “messy fractions” that haunted other early civilizations.
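If you want to check the divisibility claim yourself, a short Python sketch does it. The helper names `divisors` and `lcm` are my own choices for illustration; the comparison with 10 is included only to show the contrast with our decimal base.

```python
from functools import reduce
from math import gcd


def divisors(n: int) -> list[int]:
    """Return every positive whole number that divides n evenly."""
    return [d for d in range(1, n + 1) if n % d == 0]


def lcm(a: int, b: int) -> int:
    """Least common multiple of two positive integers."""
    return a * b // gcd(a, b)


print(divisors(60))  # [1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30, 60]
print(divisors(10))  # [1, 2, 5, 10]

# The smallest number divisible by every number from 1 to 6:
print(reduce(lcm, range(1, 7)))  # 60
```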

The Finger-Counting Theory

Some scholars believe the system originated from a manual counting method:

- Using the thumb, you can count the 12 knuckles on the four fingers of one hand.

- By using the 5 fingers of the other hand to track each set of 12, you reach $12 \times 5 = 60$.

Even though Sumerian civilization disappeared long ago, its numerical system survives in modern life through its Babylonian successors. Our timekeeping still counts 60 seconds to a minute and 60 minutes to an hour. Geometry reflects the same legacy in the division of a circle into 360 degrees, a choice that works neatly with base-60 because 360 equals 6 × 60 and divides evenly into many useful fractions.

In astronomy, later Mesopotamian scholars built on this system to track planetary motion, calculate lunar cycles, and develop the zodiac, creating a mathematical framework that linked the movements of the heavens with a structured numerical order that continues to influence science and measurement today.

Phase 4 – Egyptian Decimal System (3100 BCE)

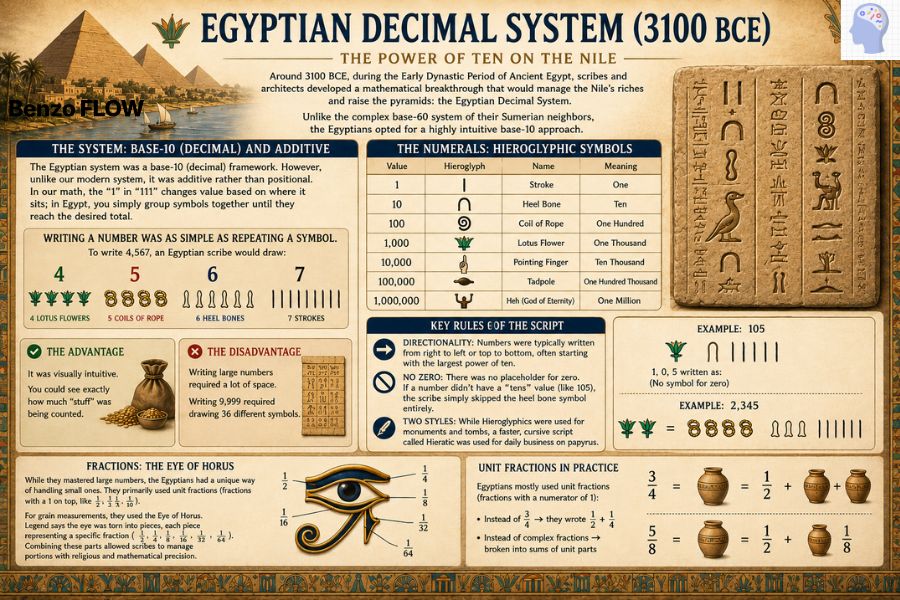

Around 3100 BCE, during the Early Dynastic Period of Ancient Egypt, scribes and architects developed a mathematical breakthrough that would manage the Nile’s riches and raise the pyramids: the Egyptian Decimal System. Unlike the complex base-60 system of their Sumerian neighbors, the Egyptians opted for a highly intuitive base-10 approach.

The Egyptian system was a base-10 (decimal) framework. However, unlike our modern system, it was additive rather than positional. In our math, the “1” in “111” changes value based on where it sits; in Egypt, you simply group symbols together until they reach the desired total.

| Value | Symbol Description | Repetition Example |

|---|---|---|

| 1 | Vertical stroke | (one line) |

| 10 | Cattle hobble / heel bone (U-loop) | 20 = two hobbles |

| 100 | Coil of rope | 300 = three coils |

| 1,000 | Lotus flower/water lily | 2,000 = two lotuses |

| 10,000 | Pointing finger | 20,000 = two fingers |

| 100,000 | Tadpole/frog | 200,000 = two tadpoles |

| 1,000,000 | God Heh (arms raised) | Symbolized “many” |

Writing a number was as simple as repeating a symbol. To write 4,567, an Egyptian scribe would draw:

- 4 Lotus flowers

- 5 Coils of rope

- 6 Heel bones

- 7 Strokes
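The repeat-the-symbol rule is easy to sketch in Python. The function name `egyptian_signs` and the plain-English sign names are my own stand-ins for the hieroglyphs, so treat this as a way to count signs rather than a rendering of the script.

```python
# (value, sign name) pairs, largest first; names follow the table above.
EGYPTIAN_SIGNS = [
    (1_000_000, "god Heh"),
    (100_000, "tadpole"),
    (10_000, "pointing finger"),
    (1_000, "lotus flower"),
    (100, "coil of rope"),
    (10, "heel bone"),
    (1, "stroke"),
]


def egyptian_signs(n: int) -> list[tuple[int, str]]:
    """Return (count, sign) pairs; powers of ten that are absent are skipped."""
    if n <= 0:
        raise ValueError("the system has no zero or negative numbers")
    result = []
    for value, name in EGYPTIAN_SIGNS:
        count, n = divmod(n, value)
        if count:
            result.append((count, name))
    return result


print(egyptian_signs(4567))
# [(4, 'lotus flower'), (5, 'coil of rope'), (6, 'heel bone'), (7, 'stroke')]

# Total signs a scribe would draw for 9,999:
print(sum(count for count, _ in egyptian_signs(9999)))  # 36
```

Because there is no zero, a number like 105 simply comes back without any tens entry.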

The pros and cons of it.

- The Advantage: It was visually intuitive. You could see exactly how much “stuff” was being counted.

- The Disadvantage: Writing large numbers required a lot of space. Writing 9,999 required drawing 36 individual signs (nine each of four symbols).

Key Rules of the Script

- Directionality: Numbers were typically written from right to left or top to bottom, often starting with the largest power of ten.

- No Zero: There was no placeholder for zero. If a number didn’t have a “tens” value (like 105), the scribe simply skipped the heel bone symbol entirely.

- Two Styles: While Hieroglyphics were used for monuments and tombs, a faster, cursive script called Hieratic was used for daily business on papyrus.

While they mastered large numbers, the Egyptians had a unique way of handling small ones. They primarily used unit fractions (fractions with a 1 on top, like $1/2, 1/3, 1/10$).

For grain measurements, they used the Eye of Horus. Legend says the eye was torn into pieces, each piece representing a specific fraction (1/2, 1/4, 1/8, 1/16, 1/32, 1/64). Combining these parts allowed scribes to manage portions with religious and mathematical precision.

In practice, scribes wrote other fractions as sums of unit fractions:

- Instead of 3/4 → they wrote 1/2 + 1/4

- Instead of complex fractions → broken into sums of unit parts
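To see how a fraction breaks into unit parts, here is a small Python sketch using the "greedy" method: keep taking the largest unit fraction that still fits. This is a later mathematical technique, not necessarily how Egyptian scribes worked, and the function name `unit_fractions` is hypothetical.

```python
from fractions import Fraction


def unit_fractions(value: Fraction) -> list[Fraction]:
    """Greedy split of a fraction between 0 and 1 into distinct unit fractions."""
    if not 0 < value < 1:
        raise ValueError("expected a fraction strictly between 0 and 1")
    parts = []
    while value > 0:
        # Smallest denominator d such that 1/d still fits (ceiling division).
        d = -(-value.denominator // value.numerator)
        unit = Fraction(1, d)
        parts.append(unit)
        value -= unit
    return parts


print(unit_fractions(Fraction(3, 4)))  # [Fraction(1, 2), Fraction(1, 4)]
print(unit_fractions(Fraction(2, 5)))  # [Fraction(1, 3), Fraction(1, 15)]
```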

Egyptian Decimal System Vs Sumerian Sexagesimal Numerals

| Feature | Egyptian System | Sumerian System |

|---|---|---|

| Base | 10 (decimal) | 60 (sexagesimal) |

| Structure | Additive (non-positional) | Positional (place-value system) |

| Zero | Not used | Later developed a placeholder concept |

| Symbols | Hieroglyphic icons for powers of 10 | Cuneiform wedge marks |

| Complexity | Simple visually, repetitive | More abstract but mathematically powerful |

| Main Use | Administration, engineering, taxation | Astronomy, trade, advanced calculations |

Key Differences Explained

Positional vs. Additive: Sumerian place value was revolutionary; a wedge meant 1, 60, or 3600 by position, like modern systems. Egyptian was purely additive, like Roman numerals (e.g., 276 = two 100-coils + seven 10-hobbles + six strokes).

Divisibility Practicality: Base-60’s factors (1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30) eased dividing goods, lunar cycles, and angles. Base-10 suited Egyptian centralized tallies (taxes, pyramids) but struggled with thirds and quarters, since 10 divides evenly only by 2 and 5.

Applications:

- Egypt: The system served the pharaohs’ practical needs: tracking Nile floods, computing pyramid volumes (cubit geometry), and assessing grain taxes.

- Sumer: City-state trade, ziggurat building, and star tracking drove the creation of advanced tables (roots, squares).

Phase 5 – Roman Numerals (8th-9th Century BCE)

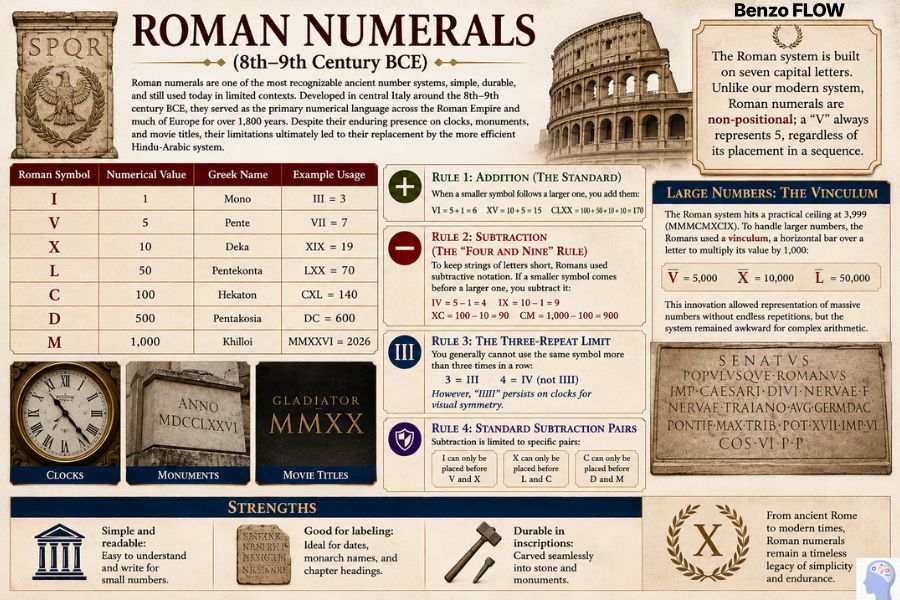

Roman numerals are one of the most recognizable ancient number systems, simple, durable, and still used today in limited contexts. Developed in central Italy around the 8th-9th century BCE, they served as the primary numerical language across the Roman Empire and much of Europe for over 1,800 years.

Despite their enduring presence on clocks, monuments, and movie titles, their limitations ultimately led to their replacement by the more efficient Hindu-Arabic system.

The Roman system is built on seven capital letters. Unlike our modern system, Roman numerals are non-positional; a “V” always represents 5, regardless of its placement in a sequence.

| Roman Symbol | Numerical Value | Greek Name | Example Usage |

|---|---|---|---|

| I | 1 | Mono | III = 3 |

| V | 5 | Pente | VII = 7 |

| X | 10 | Deka | XIX = 19 |

| L | 50 | Pentekonta | LXX = 70 |

| C | 100 | Hekaton | CXL = 140 |

| D | 500 | Pentakosia | DC = 600 |

| M | 1,000 | Khilioi | MMXXVI = 2026 |

Rule 1: Addition (The Standard)

When a smaller symbol follows a larger one, you add them:

- VI = 5 + 1 = 6

- XV = 10 + 5 = 15

- CLXX = 100 + 50 + 10 + 10 = 170

Rule 2: Subtraction (The “Four and Nine” Rule)

To keep strings of letters short, Romans used subtractive notation. If a smaller symbol comes before a larger one, you subtract it:

- IV = 5 – 1 = 4

- IX = 10 – 1 = 9

- XC = 100 – 10 = 90

- CM = 1,000 – 100 = 900

Rule 3: The Three-Repeat Limit

You generally cannot use the same symbol more than three times in a row:

- 3 = III

- 4 = IV (not IIII)

However, “IIII” persists on clocks for visual symmetry.

Rule 4: Standard Subtraction Pairs

Subtraction is limited to specific pairs:

- I can only be placed before V and X

- X can only be placed before L and C

- C can only be placed before D and M
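The four rules above translate almost directly into code. Below is a minimal Python sketch; the names `to_roman` and `from_roman` are my own, the converter is limited to 1 through 3,999, and `from_roman` assumes it is given a well-formed numeral rather than validating it.

```python
# Values paired with symbols, largest first. The six subtractive pairs
# (CM, CD, XC, XL, IX, IV) are listed explicitly, which enforces Rules 2-4.
ROMAN_PAIRS = [
    (1000, "M"), (900, "CM"), (500, "D"), (400, "CD"),
    (100, "C"), (90, "XC"), (50, "L"), (40, "XL"),
    (10, "X"), (9, "IX"), (5, "V"), (4, "IV"), (1, "I"),
]
ROMAN_VALUES = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}


def to_roman(n: int) -> str:
    """Convert an integer from 1 to 3999 into a Roman numeral."""
    if not 1 <= n <= 3999:
        raise ValueError("this sketch only covers 1 to 3999")
    pieces = []
    for value, symbol in ROMAN_PAIRS:
        count, n = divmod(n, value)
        pieces.append(symbol * count)
    return "".join(pieces)


def from_roman(numeral: str) -> int:
    """Add each symbol, or subtract it when a larger symbol follows."""
    total = 0
    for i, char in enumerate(numeral):
        value = ROMAN_VALUES[char]
        following = ROMAN_VALUES[numeral[i + 1]] if i + 1 < len(numeral) else 0
        total += -value if value < following else value
    return total


print(to_roman(2026))           # MMXXVI
print(from_roman("MCMXCVIII"))  # 1998
```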

Large Numbers: The Vinculum

The Roman system hits a practical ceiling at 3,999 (MMMCMXCIX). To handle larger numbers, the Romans used a vinculum, a horizontal bar over a letter to multiply its value by 1,000:

- V̅ = 5,000

- X̅ = 10,000

- L̅ = 50,000

This innovation allowed representation of massive numbers without endless repetitions, but the system remained awkward for complex arithmetic.

Strengths

- Simple and readable: Easy to understand and write for small numbers.

- Good for labeling: Ideal for dates, monarch names, and chapter headings.

- Durable in inscriptions: Carved seamlessly into stone and monuments.

Weaknesses

- No zero: No symbol for “nothing,” making algebra impossible.

- No place value: The “I” in III always means 1, not 100 or 1,000.

- Calculation nightmare: Multiplying, dividing, or handling large numbers requires an abacus or conversion to Arabic numerals.

- Not scalable: The system becomes unwieldy for large or complex calculations.

Today, Roman numerals are used primarily for style and tradition:

- Clock faces: I–XII on grandfather clocks.

- Monarchs and popes: King Charles III, Pope Leo XIV.

- Events: Super Bowls, Olympic Games, and movie sequels (Rocky IV).

- Formal outlines: I, II, III in research papers or legal documents.

- Copyrights: Years in movie credits (e.g., MCMXCVIII for 1998).

Roman numerals endure as cultural relics, bridging ancient Rome’s legacy with our modern world, but they remain a symbol of the past, not the future of mathematics.

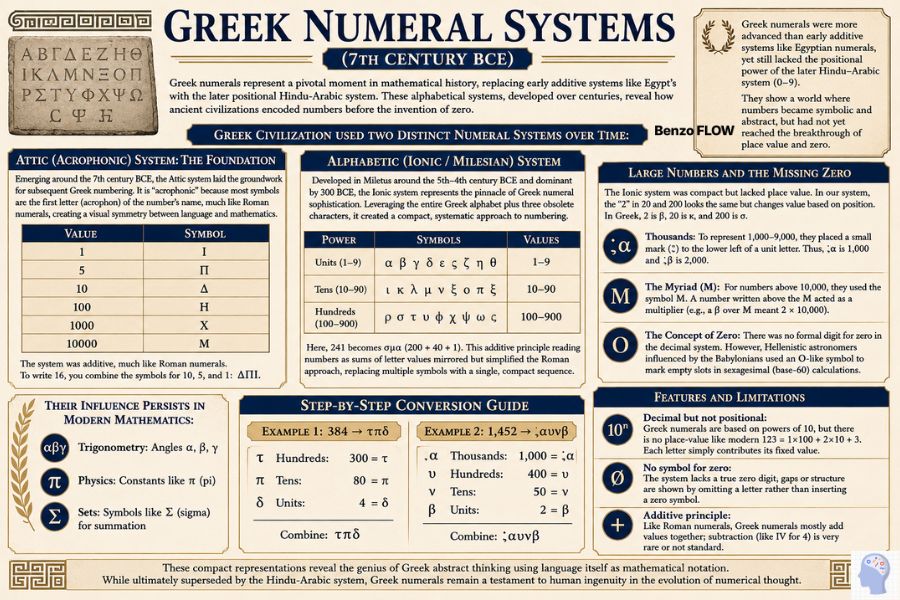

Phase 6 – Greek Numeral Systems (7th Century BCE)

Greek numerals represent a pivotal moment in mathematical history, bridging early additive systems like Egypt’s and the later positional Hindu-Arabic system. These alphabetical systems, developed over centuries, reveal how ancient civilizations encoded numbers before the invention of zero.

Greek numerals were more advanced than early additive systems like Egyptian numerals, yet still lacked the positional power of the later Hindu–Arabic system (0–9).

They show a world where numbers became symbolic and abstract, but had not yet reached the breakthrough of place value and zero.

Greek civilization used two distinct numeral systems over time:

Attic (Acrophonic) System: The Foundation

Emerging around the 7th century BCE, the Attic system laid the groundwork for subsequent Greek numbering. It is “acrophonic” because most symbols are the first letter of the number’s Greek name (Π for pente, Δ for deka, Η for hekaton, Χ for khilioi), creating a visual link between language and mathematics.

| Value | Symbol |

|---|---|

| 1 | Ι |

| 5 | Π |

| 10 | Δ |

| 100 | Η |

| 1000 | Χ |

| 10000 | Μ |

The system was additive, much like Roman numerals. To write 16, you combine the symbols for 10, 5, and 1: ΔΠΙ.

Alphabetic (Ionic / Milesian) System

Developed in Miletus around the 5th-4th century BCE and dominant by 300 BCE, the Ionic system represents the pinnacle of Greek numeral sophistication. Leveraging the entire Greek alphabet plus three obsolete characters, it created a compact, systematic approach to numbering.

| Power | Symbols | Values |

|---|---|---|

| Units (1-9) | α β γ δ ε ϛ ζ η θ | 1-9 |

| Tens (10-90) | ι κ λ μ ν ξ ο π ϟ | 10-90 |

| Hundreds (100-900) | ρ σ τ υ φ χ ψ ω ϡ | 100-900 |

Here, 241 becomes σμα (200 + 40 + 1). This additive principle, reading numbers as sums of letter values, mirrored the Roman approach but simplified it, replacing runs of repeated symbols with a single, compact sequence.

Large Numbers and the Missing Zero

The Ionic system was compact but lacked place value. In our system, the “2” in 20 and 200 looks the same but changes value based on position. In Greek, 2 is β, 20 is κ, and 200 is σ.

- Thousands: To represent 1,000–9,000, they placed a small mark (͵) to the lower left of a unit letter. Thus, ͵α is 1,000 and ͵β is 2,000.

- The Myriad (M): For numbers above 10,000, they used the symbol M. A number written above the M acted as a multiplier (e.g., a β over M meant 2 × 10,000).

- The Concept of Zero: There was no formal digit for zero in the decimal system. However, Hellenistic astronomers influenced by the Babylonians used an O-like symbol to mark empty slots in sexagesimal (base-60) calculations.

Greek letters still persist in modern mathematics and science:

- Trigonometry: Angles α, β, γ

- Geometry: The constant π (pi)

- Series: Σ (sigma) for summation

Step-by-Step Conversion Guide

Example 1: 384 → τπδ

- Hundreds: 300 = τ

- Tens: 80 = π

- Units: 4 = δ

- Combine: τπδ

Example 2: 1,452 → ͵αυνβ

- Thousands: 1,000 = ͵α

- Hundreds: 400 = υ

- Tens: 50 = ν

- Units: 2 = β

- Combine: ͵αυνβ
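The same steps can be sketched in Python. The function name `to_greek` is hypothetical, the letter strings are taken from the table earlier in this section, and I am assuming the thousands mark is the Unicode "Greek lower numeral sign" (U+0375). The sketch stops at 9,999 and leaves out the myriad notation.

```python
UNITS = "αβγδεϛζηθ"         # 1-9
TENS = "ικλμνξοπϟ"          # 10-90
HUNDREDS = "ρστυφχψωϡ"      # 100-900
THOUSANDS_MARK = "\u0375"   # small mark placed before a unit letter (assumed code point)


def to_greek(n: int) -> str:
    """Convert an integer from 1 to 9999 into Ionic (alphabetic) numerals."""
    if not 1 <= n <= 9999:
        raise ValueError("this sketch only covers 1 to 9999")
    thousands, rest = divmod(n, 1000)
    hundreds, rest = divmod(rest, 100)
    tens, units = divmod(rest, 10)
    out = ""
    if thousands:
        out += THOUSANDS_MARK + UNITS[thousands - 1]
    if hundreds:
        out += HUNDREDS[hundreds - 1]
    if tens:
        out += TENS[tens - 1]
    if units:
        out += UNITS[units - 1]
    return out


print(to_greek(384))   # τπδ
print(to_greek(241))   # σμα
print(to_greek(1452))  # thousands mark + αυνβ
```

Each power of ten gets its own letter, and a missing power is simply skipped, which is why no zero is needed.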

These compact representations reveal the genius of Greek abstract thinking using language itself as mathematical notation. While ultimately superseded by the Hindu-Arabic system, Greek numerals remain a testament to human ingenuity in the evolution of numerical thought.

Features and limitations

- Decimal but not positional: Greek numerals are based on powers of 10, but there is no place value as in modern 123 = 1×100 + 2×10 + 3. Each letter simply contributes its fixed value.

- No symbol for zero: The system lacks a true zero digit; gaps or structure are shown by omitting a letter rather than inserting a zero symbol.

- Additive principle: Like Roman numerals, Greek numerals mostly add values together; subtraction (like IV for 4) is very rare or not standard.

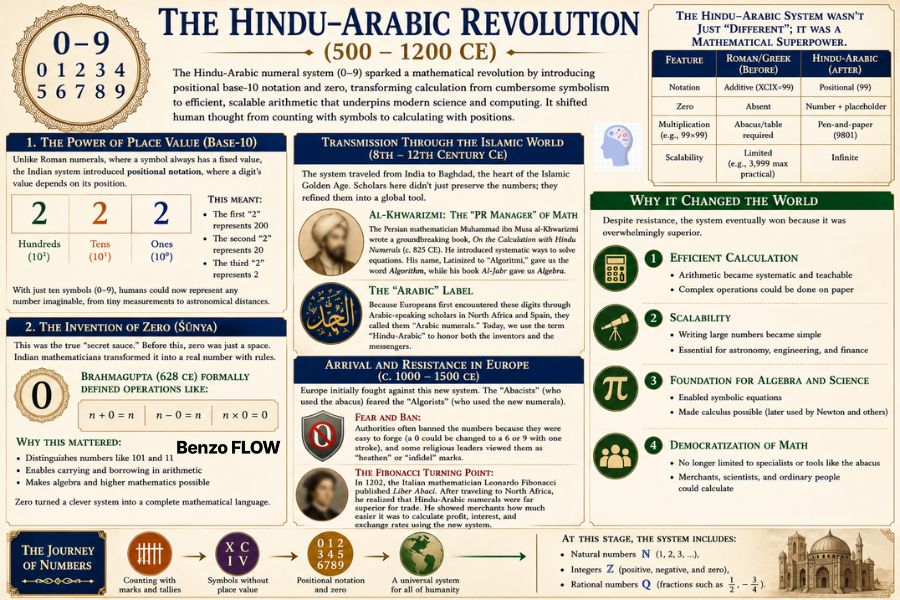

Phase 7 – The Hindu–Arabic Revolution (500 – 1200 CE)

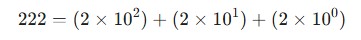

The Hindu-Arabic numeral system (0–9) sparked a mathematical revolution by introducing positional base-10 notation and zero, transforming calculation from cumbersome symbolism to efficient, scalable arithmetic that underpins modern science and computing. It shifted human thought from counting with symbols to calculating with positions.

1. The Power of Place Value (Base-10)

Unlike Roman numerals, where a symbol always has a fixed value, the Indian system introduced positional notation, where a digit’s value depends on its position.

This meant that in a number like 222:

- The first “2” represents 200

- The second “2” represents 20

- The third “2” represents 2

With just ten symbols (0–9), humans could now represent any number imaginable, from tiny measurements to astronomical distances.
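Here is a tiny Python sketch of that idea: it expands a number into what each digit contributes based on where it sits. The function name `place_values` is just my label for the illustration.

```python
def place_values(n: int) -> list[int]:
    """Expand a non-negative integer into the value contributed by each digit."""
    if n < 0:
        raise ValueError("only non-negative integers are supported")
    digits = str(n)
    return [int(d) * 10 ** (len(digits) - i - 1) for i, d in enumerate(digits)]


print(place_values(222))  # [200, 20, 2]
print(place_values(101))  # [100, 0, 1]
print(place_values(11))   # [10, 1]
```

The zero in 101 contributes nothing on its own, but it pushes the leading 1 into the hundreds place, which is how 101 stays distinct from 11.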

2. The Invention of Zero (Śūnya)

This was the true “secret sauce.”

Before this, zero was just a space. Indian mathematicians transformed it into a real number with rules.

Brahmagupta (628 CE) formally defined operations like:

- n + 0 = n

- n − 0 = n

- n × 0 = 0

Why this mattered:

- Distinguishes numbers like 101 and 11

- Enables carrying and borrowing in arithmetic

- Makes algebra and higher mathematics possible

Zero turned a clever system into a complete mathematical language.

Transmission Through the Islamic World (8th – 12th Century CE)

The system traveled from India to Baghdad, the heart of the Islamic Golden Age. Scholars here didn’t just preserve the numbers; they refined them into a global tool.

- Al-Khwarizmi: The “PR Manager” of Math: The Persian mathematician Muhammad ibn Musa al-Khwarizmi wrote a groundbreaking book, On the Calculation with Hindu Numerals (c. 825 CE). He introduced systematic ways to solve equations. His name, Latinized to “Algoritmi,” gave us the word Algorithm, while his book Al-Jabr gave us Algebra.

- The “Arabic” Label: Because Europeans first encountered these digits through Arabic-speaking scholars in North Africa and Spain, they called them “Arabic numerals.” Today, we use the term “Hindu-Arabic” to honor both the inventors and the messengers.

Arrival and Resistance in Europe (c. 1000 – 1500 CE)

Europe initially fought against this new system. The “Abacists” (who used the abacus) feared the “Algorists” (who used the new numerals).

- Fear and Ban: Some authorities restricted the numerals because they were seen as easy to forge (a 0 could be changed to a 6 or 9 with one stroke), and some religious leaders viewed them as “heathen” or “infidel” marks.

- The Fibonacci Turning Point: In 1202, the Italian mathematician Leonardo Fibonacci published Liber Abaci. After traveling to North Africa, he realized that Hindu-Arabic numerals were far superior for trade. He showed merchants how much easier it was to calculate profit, interest, and exchange rates using the new system.

The Hindu-Arabic system wasn’t just “different”; it was a mathematical superpower.

| Feature | Roman/Greek (Before) | Hindu-Arabic (After) |

|---|---|---|

| Notation | Additive (XCIX=99) | Positional (99) |

| Zero | Absent | Number + placeholder |

| Multiplication (e.g., 99×99) | Abacus/table required | Pen-and-paper (9801) |

| Scalability | Limited (e.g., 3,999 max practical) | Infinite |

Why It Changed the World

Despite resistance, the system eventually won because it was overwhelmingly superior.

1. Efficient Calculation

- Arithmetic became systematic and teachable

- Complex operations could be done on paper

2. Scalability

- Writing large numbers became simple

- Essential for astronomy, engineering, and finance

3. Foundation for Algebra and Science

- Enabled symbolic equations

- Made calculus possible (later developed by Newton, Leibniz, and others)

4. Democratization of Math

- No longer limited to specialists or tools like the abacus

- Merchants, scientists, and ordinary people could calculate

At this stage, the system includes:

- Natural numbers ℕ (1, 2, 3, …),

- Integers ℤ (positive, negative, and zero),

- Rational numbers ℚ (fractions such as 1/2, −3/4).

Phase 8 – Expansion with Scientific Revolution (16th – 19th Century)

The Scientific Revolution transformed the Hindu-Arabic decimal system from a merchant’s tool into the backbone of modern science, enabling precise measurements of the universe’s infinite scales. This era fused arithmetic with discovery, expanding numbers to handle motion, space, and the abstract.

Europe’s 16th-century shift from medieval dogma to empirical inquiry demanded better math for astronomy, navigation, and engineering. Hindu-Arabic numerals provided the base, but fractions and Roman systems slowed complex work like planetary tracking. Scientists extended numbers to conquer these limits, powering global voyages and industry.

1. The Death of the Fraction: The Decimal Revolution

By the late 1500s, Europe was still struggling with cumbersome fractions. If you wanted to calculate 1/8 + 1/3, it was a headache.

- Simon Stevin (1585): The Flemish mathematician published De Thiende (“The Tenth”), where he argued that all weights, measures, and currencies should be divided into tenths. He introduced a systematic way to represent fractions as “decimal parts.”

- The Decimal Point: While Stevin’s notation was clunky (he used circled numbers to indicate positions), it paved the way for the modern decimal point. This allowed scientists to calculate with a level of precision, especially in astronomy, that was previously impossible.
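The 1/8 + 1/3 headache can be sketched in a few lines of Python. This is only an illustration of the contrast, not Stevin's own notation; the helper name `add_fractions` is an assumption made for this example.

```python
from math import gcd


def add_fractions(n1: int, d1: int, n2: int, d2: int) -> tuple[int, int]:
    """Add n1/d1 + n2/d2 the long way: find a common denominator, then reduce."""
    common = d1 * d2 // gcd(d1, d2)                      # least common denominator
    numerator = n1 * (common // d1) + n2 * (common // d2)
    divisor = gcd(numerator, common)
    return numerator // divisor, common // divisor


# Fraction route: requires the common denominator 24.
print(add_fractions(1, 8, 1, 3))        # (11, 24)

# Decimal route: line up the places and add, accepting a small rounding error.
print(round(0.125 + 1 / 3, 3))          # 0.458
```

The decimal answer is an approximation (1/3 never terminates in base 10), but for measurement work it is usually precise enough and far quicker to compute.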

2. Logarithms: Simplifying the Infinite

As the Scientific Revolution took hold, astronomers like Johannes Kepler were drowning in manual calculations. Multiplying massive numbers (like the distance between planets) took weeks.

- John Napier (1614): Napier invented logarithms. This mathematical shortcut allowed complex multiplication to be performed through simple addition.

- The Slide Rule: Based on logarithmic scales, the slide rule became one of the most widely used “analog computers” in history. For roughly the next 350 years, until the 1970s, it was a primary tool used to design everything from bridges to rocket engines.
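The trick behind both logarithm tables and the slide rule is the identity log(a × b) = log(a) + log(b). Here is a minimal sketch using base-10 logarithms for convenience; Napier's original construction was different, and the function name `multiply_via_logs` is just an illustrative label.

```python
import math


def multiply_via_logs(a: float, b: float) -> float:
    """Multiply two positive numbers by adding their logarithms."""
    log_sum = math.log10(a) + math.log10(b)   # the "table lookup" and addition
    return 10 ** log_sum                       # the "reverse lookup"


product = multiply_via_logs(4567.0, 8910.0)
print(math.isclose(product, 4567 * 8910))      # True
```

A slide rule performs the same addition physically, by sliding one logarithmic scale along another.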

3. The Marriage of Geometry and Algebra: Where Numbers Meet Space

Perhaps the most significant expansion of the number system occurred in 1637 when René Descartes published La Géométrie.

- The Coordinate Plane: Descartes realized he could represent a point on a plane using a pair of numbers (x, y). This “analytic geometry” meant that problems about shapes (geometry) could be solved using equations (algebra).

- Negative Numbers: While previously treated with suspicion (often called “false numbers”), the coordinate plane made negative numbers essential. They were no longer just “debts”; they were directions on a grid.
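As a small illustration of geometry turning into arithmetic, the sketch below treats points as (x, y) pairs, negative coordinates included. The helper names `distance` and `on_circle` are assumptions for this example.

```python
import math


def distance(p: tuple[float, float], q: tuple[float, float]) -> float:
    """Straight-line distance between two points, via the Pythagorean theorem."""
    return math.hypot(q[0] - p[0], q[1] - p[1])


def on_circle(point, center, radius, tol=1e-9) -> bool:
    """A circle is just the set of points whose distance from the center equals the radius."""
    return abs(distance(point, center) - radius) <= tol


print(distance((-3, 0), (0, 4)))          # 5.0
print(on_circle((-3, -4), (0, 0), 5))     # True
```

Here a negative number is simply a position to the left of or below the origin, exactly the “direction on a grid” role described above.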

4. The Calculus Explosion (Late 17th Century)

The Scientific Revolution reached its peak with Isaac Newton and Gottfried Wilhelm Leibniz. They needed a way to measure change at a single instant (like the speed of a falling apple at one precise moment).

- Infinitesimals: To solve this, they expanded the number system conceptually to include “infinitely small” values.

- Calculus: This new branch of math allowed scientists to model planetary orbits, fluid dynamics, and the laws of motion. It proved that the Hindu-Arabic system was robust enough to describe the continuous flow of time and space.
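The idea of an “infinitely small” interval can be approximated numerically. This sketch assumes a simple free-fall model (distance = ½·g·t², no air resistance) with g = 9.81 m/s²; the function names are illustrative.

```python
G = 9.81  # gravitational acceleration in m/s^2 (assumed constant)


def fallen_distance(t: float) -> float:
    """Distance in meters fallen after t seconds, ignoring air resistance."""
    return 0.5 * G * t * t


def average_speed(t: float, dt: float) -> float:
    """Average speed over the short interval from t to t + dt."""
    return (fallen_distance(t + dt) - fallen_distance(t)) / dt


# Shrinking the interval pushes the average speed toward the
# instantaneous speed at t = 1 second, which is G * 1 = 9.81 m/s.
for dt in (0.1, 0.01, 0.001):
    print(dt, average_speed(1.0, dt))
```

Calculus replaces this “keep shrinking the interval” procedure with an exact limit, the derivative.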

5. Rigor and the “Imaginary” (18th – 19th Century)

As the 1800s approached, mathematicians began exploring numbers that defied “common sense.”

- Complex Numbers: Scholars like Leonhard Euler and Carl Friedrich Gauss popularized the use of i (the square root of -1). While called “imaginary,” these numbers became vital for describing waves, electricity, and magnetism.

- The Real Number Line: By the late 19th century, mathematicians like Richard Dedekind finally gave the number system a rigorous logical foundation, defining “Real Numbers” (which include both rational numbers like 1/2 and irrational numbers like pi).
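Python happens to have complex numbers built in (it writes i as `1j`), so the basic behavior is easy to try. The helper name `rotate_quarter_turn` is an assumption for this sketch.

```python
import cmath
import math

i = 1j
print(i * i == -1)                      # True: i squared is -1


def rotate_quarter_turn(z: complex) -> complex:
    """Multiplying by i rotates a point 90 degrees counterclockwise around the origin."""
    return z * 1j


print(rotate_quarter_turn(3 + 4j))      # (-4+3j)

# Euler's identity: e^(i*pi) is -1, up to floating-point rounding.
print(abs(cmath.exp(1j * math.pi) + 1) < 1e-12)   # True
```

This link between multiplication and rotation is part of why complex numbers are so convenient for describing waves and alternating currents.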

Notation and Measurement Standardization

By the 1700s, symbols such as +, −, ×, and ÷, along with variables like x and y, were in widespread use, giving mathematicians a compact, shared notation.

The metric system’s decimal base, introduced in the 1790s, unified measurements and supported the growth of factories and railroads. This increasing precision also contributed to imperial expansion, influencing technologies and industries ranging from telegraphs to battleships.

| Innovation | Key Figure(s) | Impact |
| --- | --- | --- |
| Decimals | Stevin (1585) | Precision in science |
| Logarithms | Napier (1614) | Fast calculations |
| Coordinates | Descartes (1637) | Space via numbers |
| Calculus | Newton/Leibniz | Motion modeling |
| Complex | Euler/Gauss | Electricity, waves |

Phase 9 – The Digital Transformation (20th Century – Present)

While the Scientific Revolution taught numbers how to describe the physical laws of the universe, the Digital Transformation taught numbers how to become the world. In this era, the Hindu-Arabic system underwent a radical metamorphosis: transitioning from ink on paper to pulses of electricity.

This is the story of how 10 digits became 2, and how those 2 digits built the modern age.

1. The Great Translation: From Decimal to Binary

For millennia, humans used base-10 (decimal) because we have ten fingers. However, electronic machines require a more reliable language.

- Claude Shannon (1937): In one of the most important master’s theses in history, Shannon proved that Boolean logic (True/False) could be mapped onto electrical circuits.

- The Binary Switch: By using Binary (Base-2), computers represent all information using only two states: 0 (Off) and 1 (On).

- The Bit: This “Binary Digit” became the fundamental unit of information. While we still interact with computers using decimal numbers, the hardware translates every keystroke into long strings of binary code to process logic.
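The translation from decimal to binary is repeated division by 2, keeping the remainders. Here is a minimal sketch for non-negative whole numbers; the function name `to_binary` is illustrative, and Python's built-in `bin()` does the same job.

```python
def to_binary(n: int) -> str:
    """Convert a non-negative integer to its base-2 digits."""
    if n == 0:
        return "0"
    bits = []
    while n > 0:
        bits.append(str(n % 2))   # remainder is the next bit, lowest place first
        n //= 2
    return "".join(reversed(bits))


print(to_binary(99))              # 1100011
print(int("1100011", 2))          # 99
```

Binary is still a positional system: the columns simply stand for 1, 2, 4, 8, and so on instead of 1, 10, 100, 1000.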

2. The Rise of the Algorithm: Numbers as Instructions

In the mid-20th century, numbers evolved from static values into active processes.

- The Transistor (1947): This tiny component replaced bulky vacuum tubes, allowing for the miniaturization of logic gates. Computers shrank from room-sized giants like ENIAC to pocket-sized smartphones.

- Self-Executing Logic: The concept of the “algorithm,” a word derived from the name of the 9th-century scholar Al-Khwarizmi, took on new life. Numbers were no longer just the results of a calculation; they became the instructions (code) that told the machine what to do next.

3. Digitization: The Universal Language of Media

The most “invisible” part of this era is the conversion of our physical senses into numerical data.

- Sampling and Pixels: To a computer, a song is a list of amplitude values sampled thousands of times per second. A digital photo is a matrix of pixels, where every coordinate (x, y) is assigned numerical values for red, green, and blue (RGB).

- Encoding Reality: Text (via Unicode), sound, and video are all stored as numbers in binary form. We have essentially translated the physical world into a massive, computable spreadsheet.
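A toy version of all three conversions fits in a few lines. Everything here is a simplified sketch: real audio and image formats add compression and metadata, and the names `text_to_bits`, `sample_wave`, and `image` are assumptions for this example.

```python
import math


def text_to_bits(text: str) -> list[str]:
    """Encode text as UTF-8 bytes and show each byte as 8 binary digits."""
    return [format(byte, "08b") for byte in text.encode("utf-8")]


def sample_wave(freq_hz: float, sample_rate: int, count: int) -> list[float]:
    """Measure a sine wave's height at evenly spaced moments in time."""
    return [round(math.sin(2 * math.pi * freq_hz * n / sample_rate), 3)
            for n in range(count)]


# A 2x2 "photo": each pixel is a (red, green, blue) triple from 0 to 255.
image = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (255, 255, 255)],
]

print(text_to_bits("A"))          # ['01000001']
print(sample_wave(1, 4, 4))       # [0.0, 1.0, 0.0, -1.0]
print(image[1][0])                # (0, 0, 255), the blue pixel at x=0, y=1
```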

4. Big Data and Cryptography: Numbers as Power

By the 21st century, numbers became the primary assets of the global economy.

- Datafication: Every “click” or “like” is a numerical data point. Through Big Data, AI analyzes these patterns to predict human behavior.

- The Blockchain: Money has moved from physical gold to cryptographic ledgers. Using advanced number theory, such as elliptic-curve arithmetic over prime fields and cryptographic hash functions, cryptocurrencies like Bitcoin secure value through math rather than through central banks.

5. The AI and Quantum Frontier (The 2026 Perspective)

Today, we are pushing the number system into a realm that mirrors human thought.

- Neural Networks: AI systems like ChatGPT store what they have learned as numerical “weights” and “biases,” and perform trillions of floating-point operations per second (FLOPS) to simulate intelligence.

- Quantum Computing: We are moving beyond binary. While a standard bit is 0 or 1, a qubit can exist in a “superposition” of both. For certain problems, this could deliver dramatic speedups and solve mathematical problems previously thought intractable.
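To show what “weights” and “biases” look like as arithmetic, here is a single artificial neuron. It is a deliberately tiny sketch: real systems chain billions of these operations, and the choice of a sigmoid activation and the function name `neuron` are assumptions made for illustration.

```python
import math


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of the inputs plus a bias, squashed into the range 0..1."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 / (1 + math.exp(-z))     # sigmoid activation


# 1.0*0.5 + 2.0*(-0.25) + 0.0 = 0.0, and sigmoid(0) = 0.5
print(neuron([1.0, 2.0], [0.5, -0.25], 0.0))   # 0.5
```

Training a network means nudging those weight and bias numbers until the outputs become useful.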

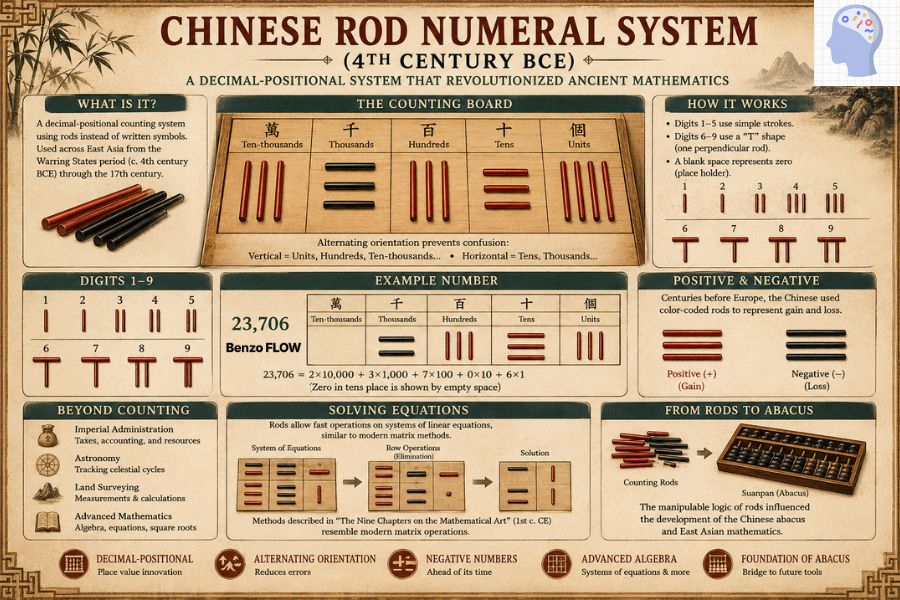

Some numeral systems, like Chinese rod numerals and Brahmi numerals, were also developed in parallel.

Chinese Rod Numeral System (4th Century BCE)

Long before the modern calculator or the European abacus, ancient East Asia was solving complex algebraic equations using a tactile, high-speed system known as Chinese Rod Numerals (chóuzhuàn). Used from the Warring States period (c. 4th century BCE) through the 17th century, these small bamboo, ivory, or wooden rods were more than just counters; they represented a revolutionary leap in decimal-positional logic.

How Rod Numerals Worked

Instead of writing numbers with ink, mathematicians arranged rods on a checkered counting board. The system was a “decimal-positional” hybrid, meaning the value of a digit changed based on its location, just like our numbers today.

To prevent errors, the system used a brilliant alternating orientation:

- Vertical Rods: Used for units, hundreds, and ten-thousands.

- Horizontal Rods: Used for tens, thousands, and so on.

For digits 1–5, scribes used simple strokes (e.g., three vertical rods for 3). For 6–9, they used a “T” shape: one rod placed perpendicular to the others (e.g., one horizontal rod over one vertical rod for 6). This bi-orientation allowed for instant visual distinction between columns without needing a written symbol for zero; a blank space on the board sufficed as a placeholder.
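The layout rules above can be sketched as a small function. This is a simplified model built only from the description in this section (alternating orientation by column, a single perpendicular rod standing for five in digits 6–9, and a blank for zero); the name `rod_layout` and the tuple format are assumptions for this example.

```python
def rod_layout(n: int) -> list[tuple[int, str, int, int]]:
    """Describe each decimal column as (digit, orientation, five_rods, unit_rods),
    most significant column first. A digit of 0 uses no rods at all."""
    digits = [int(ch) for ch in str(n)]
    layout = []
    for position, digit in enumerate(reversed(digits)):
        # Units, hundreds, ten-thousands are vertical; tens, thousands horizontal.
        orientation = "vertical" if position % 2 == 0 else "horizontal"
        if digit >= 6:
            five_rods, unit_rods = 1, digit - 5   # one perpendicular rod counts as five
        else:
            five_rods, unit_rods = 0, digit
        layout.append((digit, orientation, five_rods, unit_rods))
    return list(reversed(layout))


for column in rod_layout(206):
    print(column)
# (2, 'vertical', 0, 2)
# (0, 'horizontal', 0, 0)
# (6, 'vertical', 1, 1)
```

In 206, the empty tens column uses no rods, and the two neighboring columns are both vertical, which signals to the reader that a horizontal column has been skipped.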

Beyond basic counting, rod numerals were built for speed and advanced calculation. They were the primary tool for imperial administration, used to calculate taxes, track astronomical cycles, and conduct land surveys.

- Positive and Negative Logic: Centuries before Europe accepted the concept of negative numbers, Chinese mathematicians were using color-coded rods: red for positive (gain) and black for negative (loss).

- Advanced Algebra: Using these rods, scholars could solve systems of linear equations and extract square roots. These methods, documented in the classic text The Nine Chapters on the Mathematical Art (1st century CE), bear a striking resemblance to modern matrix operations.

- The Bridge to the Abacus: The physical manipulability of the rods eventually influenced the creation of the suanpan (abacus) and Japanese wasan mathematics.
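The resemblance to matrix operations is easiest to see in code. Below is a minimal sketch of row elimination on a grid of coefficients, using exact fractions. It is a modern Gauss-Jordan routine, not a reconstruction of the historical board procedure, and the three-unknown system is chosen purely for illustration. It assumes the system has exactly one solution.

```python
from fractions import Fraction


def solve_linear_system(rows: list[list[int]]) -> list[Fraction]:
    """Solve an augmented matrix [coefficients | total] by row elimination."""
    m = [[Fraction(v) for v in row] for row in rows]
    n = len(m)
    for col in range(n):
        # Find a row with a non-zero entry in this column and move it up.
        pivot = next(r for r in range(col, n) if m[r][col] != 0)
        m[col], m[pivot] = m[pivot], m[col]
        pivot_value = m[col][col]
        m[col] = [v / pivot_value for v in m[col]]
        # Clear this column from every other row.
        for r in range(n):
            if r != col:
                factor = m[r][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[col])]
    return [row[-1] for row in m]


system = [
    [3, 2, 1, 39],   # 3x + 2y + 1z = 39
    [2, 3, 1, 34],   # 2x + 3y + 1z = 34
    [1, 2, 3, 26],   # 1x + 2y + 3z = 26
]
print(solve_linear_system(system))
# [Fraction(37, 4), Fraction(17, 4), Fraction(11, 4)]
```

On a counting board, each column of rods would hold one equation's coefficients, and the subtractions would be carried out by physically adding and removing rods.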

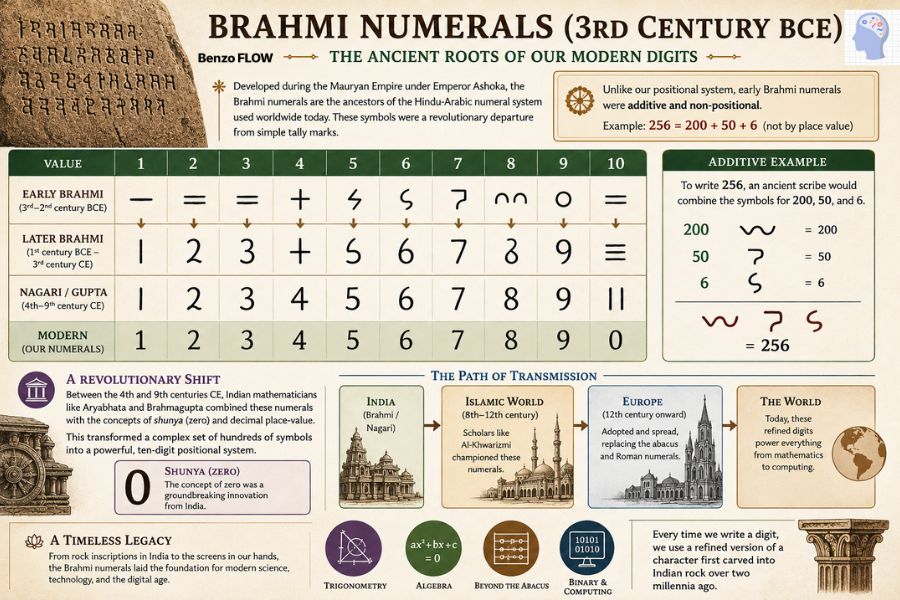

Brahmi Numerals (3rd Century BCE)

The Brahmi numerals, which emerged around the 3rd century BCE during the Mauryan Empire under Emperor Ashoka, are the graphic ancestors of the Hindu-Arabic numeral system used globally today. While we now take our “1-2-3” for granted, these symbols were a revolutionary departure from simple tally marks.

Originally, the Brahmi system was additive and non-positional; it utilized distinct symbols for units (1–9), tens (10–90), hundreds, and thousands. To write a number like 256, an ancient scribe would not rely on place value but would instead combine the specific signs for 200, 50, and 6, a method conceptually similar to Roman numerals but utilizing a unique, cursive-leaning script.
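The additive idea can be sketched by splitting a number into the separate values a scribe would need a sign for. The function name `additive_parts` is an assumption for this example, and the output lists values rather than the actual Brahmi glyphs.

```python
def additive_parts(n: int) -> list[int]:
    """Split a positive integer into the place-valued chunks that an additive
    system writes with separate signs, largest first. Zero digits need no sign."""
    parts = []
    place = 1
    while n > 0:
        digit = n % 10
        if digit:
            parts.append(digit * place)
        n //= 10
        place *= 10
    return parts[::-1]


print(additive_parts(256))   # [200, 50, 6]
print(additive_parts(206))   # [200, 6]
```

Notice that 206 needs no zero at all in this scheme, which is one reason additive systems could function for centuries without one.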

The visual evolution of these characters offers a fascinating glimpse into how our modern digits took shape. The early Brahmi “1,” “2,” and “3” began as simple horizontal strokes which, over centuries of rapid transcription, rotated and connected to become the vertical “1” and the curved “2” and “3” we recognize today. Other digits, like the cross-shaped “4” and the hooked “7,” bear a striking resemblance to their contemporary counterparts even in their earliest forms.

As the script transitioned through the Gupta and Nagari eras (4th to 9th centuries CE), it underwent a profound conceptual shift. Indian mathematicians like Aryabhata and Brahmagupta integrated these symbols with the revolutionary concepts of shunya (zero) and decimal place-value. This transformation streamlined a complex library of dozens of individual symbols into a sleek, ten-digit system.

The Path of Transmission: These refined numerals traveled from India through the Islamic world, where they were championed by scholars like Al-Khwarizmi, and eventually reached Europe.

By replacing the cumbersome abacus and Roman numerals, this flexible system carried the legacy of the Brahmi script into the “Golden Age” of mathematics, enabling advances in trigonometry, algebra, and, eventually, the binary logic of modern computing. Today, every time we write a digit, we are using a refined version of a character first carved into Indian rock over two millennia ago.

Conclusion

The 40,000-year odyssey of numbers is, at its core, the story of human consciousness. What began as a survival instinct, notching the Lebombo Bone to track lunar cycles, has evolved into a multidimensional language capable of modeling the cosmos. Numbers are the ultimate human invention: a universal lens that allows us to bridge the gap between the physical world and the abstract mind.

As we stand at the threshold of quantum computing and multidimensional logic, the story of numbers is far from over. We are once again expanding our numerical frontiers into new dimensions of complexity that our ancestors could never have imagined.

The next time you check the time, pay for a coffee, or look at a GPS coordinate, remember: you aren’t just using a tool. You are participating in a global legacy that began with a single scratch on a prehistoric bone. Numbers didn’t just help us count the world; they helped us build it.